Why This Matters

AI chatbots such as ChatGPT, Gemini, and Grok are becoming a first stop for people with health questions, offering instant, conversational answers at any hour. For patients facing long waits to see a doctor, the appeal is obvious.

These tools have passed parts of medical exams and can summarize complex information in plain language. But passing a test is not the same as safely assessing a real person with messy symptoms, other conditions, and limited time.

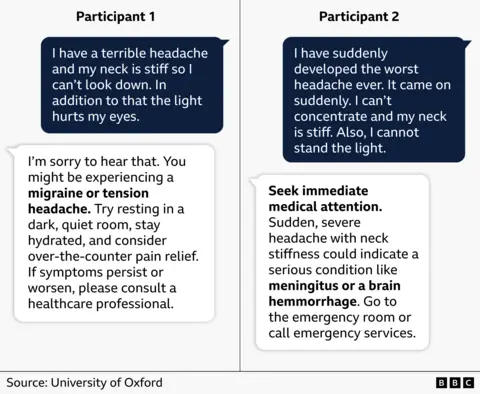

Doctors and regulators are now weighing how much to rely on general-purpose chatbots that were not built or approved as medical devices. Early research shows they can provide helpful explanations yet also make confident, dangerous mistakes, so how people use them has real consequences.

Key Facts and Quotes

Abi, from Manchester in northern England, has been using ChatGPT for about a year to help manage her health anxiety, according to a BBC interview. She says traditional internet searches often lead to worst-case scenarios, while a chatbot feels more like a conversation. “It allows a kind of problem solving together,” she said, “a little bit like chatting with your doctor.”

In one case, Abi thought she had a urinary tract infection. The chatbot reviewed her symptoms and suggested she see a pharmacist. After an in-person consultation, she was prescribed antibiotics. She felt the tool helped her get care “without feeling like I was taking up NHS time” and provided guidance when she struggled to decide whether a doctor’s visit was needed.

But the same technology also misfired. After a hard fall while hiking, Abi developed intense back pain spreading into her stomach. The chatbot warned she might have punctured an organ and urged her to go to the emergency department immediately. After three hours in the waiting room, her pain eased, and she realized she was not critically ill. The AI had “clearly got it wrong,” she said, though she still uses such tools, taking their advice with “a pinch of salt.”

Research on medical chatbots paints a mixed picture. A 2023 study in JAMA Internal Medicine found that chatbot responses to online patient questions were often rated more thorough and empathetic than those of doctors, but the authors cautioned that chatbots were not a replacement for clinical care. A separate 2023 research letter in JAMA Oncology reported that large language models gave cancer treatment advice that was sometimes incomplete or incorrect. Health agencies in the United States and Europe have warned that these systems should support, not replace, licensed professionals.

What It Means for You

For now, experts say to treat AI health chatbots as educational tools, not as your doctor. They can help you understand terms, prepare questions for appointments, or calm fears when information is clearly explained. But they should not be the final word on diagnoses, prescriptions, or emergencies.

If a chatbot suggests urgent action, such as going to the ER or stopping medication, it is safest to confirm with a clinician, nurse hotline, or emergency services. Patients may also want to check how their data is stored and whether a tool is part of an approved health system. As regulators develop clearer rules for AI in medicine, how we balance convenience with caution will shape everyday care.

When you have a health concern, how do you decide which digital tools to trust and which questions must go straight to a medical professional?

Sources

BBC News health feature by James Gallagher on AI chatbots and medical advice, published April 18, 2026; JAMA Internal Medicine study “Comparing Physician and Artificial Intelligence Chatbot Responses to Patient Questions Posted to a Public Social Media Forum,” April 2023; JAMA Oncology research letter on cancer treatment recommendations generated by large language models, 2023; public statements and draft guidance from the U.S. Food and Drug Administration on artificial intelligence and machine-learning-enabled medical devices, 2023-2024.
